Tony Davies Columns

Tony considers the “Life and death of a data set: a forensic investigation”. Over time, spectral data will become increasingly fragmented and lose important supporting information. Computer and software upgrades, and processing in chemometric programs can all cause this. Of course, the answer is to follow FAIR principles and ensure that they are implemented in the analytical laboratory.

This is the second part of an interview with John Hollerton; the first part is in the last issue! John recently retired from a long career at GSK and we took this opportunity to have a chat with him, as Mohan Cashyap and our beloved editor Ian Michael have both had the opportunity to work with John on projects and on the LISMS conference (Linking and Interpreting Spectra through Molecular Structures). Here, we move the discussion on to technologies and innovation: the great, the over-hyped and the effectively lost to the modern analytical laboratory.

Tony and Mohan Cashyap interview John Hollerton, who has just retired after a career of over 40 years at GSK (and its many previous names). John has been responsible for many aspects of analytical chemistry at GSK. As Tony says, he is “an innovative ideas man with some interesting stories”.

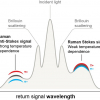

This article describes a really interesting use of spectroscopic data processing from optical fibre cables.

Tony Davies has started a timeline of significant spectroscopic system developments aligned with Queen Elizabeth’s reign as recently celebrated in her Platinum Jubilee. Jumping from Princess Anne the Princess Royal’s birth to Heinrich Kaiser certainly makes for a novel approach! Tony hopes that we can turn this into an online resource with your help.

This column starts to answer the question, “how does one actually find FAIR data?” with a detailed example from Imperial College London.

Tony Davies marks the passing of Svante Wold, who gave us “chemometrics”. It all started with a grant application!

Tony Davies has discovered there is a new UK National Data Strategy and that it is on the right lines: echoing many of his suggestions in this column over many years.

COLID is a Finding Aid, essentially a “catalogue of catalogues” collating any data source with which it is connected. It collects and provides metadata about basically any resource that you want to incorporate, links endpoints, such as spectra in a repository or details in a chemicals database, and links it all semantically with any other related resource.

Tony Davies and Luc Patiny introduce us to a free online NMR data processing tool in “NMRium browser-based nuclear magnetic resonance data processing”. They run through the background to the project, how it works and how you can try it yourself. There is a video introduction and an online demo page where you can play with different scenarios.

Following our articles on the FAIR initiative, we now look at some examples of the FAIRification of data handling, collection and archiving.

The Tony Davies Column offers a challenge to us all with another contribution on FAIR data, which should be Findable, Accessible, Interoperable and Reusable. It is clearly the way we should all be going: everybody, from manufacturers and software developers through researchers to publishers, needs to work together.

Following on from a recent column that reported on work which had shown that weight fractions were often incorrect concentration units to use in quantitative chemometric studies, Howard Mark goes into more detail.

Peter McIntyre and Tony Davies remember Bill George, a real Welsh character and educator whose style and charisma influenced many to go on and not only stay in science but to rise to leading positions either in industry or academia.

In quantitative analysis, is it better to weigh materials when making up standard solutions or to use volumetric techniques? Traditionally, the answer has been “volume”; however, things may not be as straightforward as they seem. Henk-Jan and colleagues have conducted a new experiment, using robots for both sample preparation and spectroscopic analysis, which may provide a definitive answer. Unfortunately, the answer must wait for publication of their paper, but Tony and Henk-Jan’s history of this question makes interesting reading nevertheless.

Whilst automation is not a panacea, it can improve the accuracy of manual tasks as well as freeing up our time for more challenging tasks. The authors explore some particular examples they have come across and lessons learned from them.

Tony Davies and Mohan Cashyap discuss this topic with help from a number of industry experts. Whilst there are undoubted computing and networking issues for regulated industries in allowing working from home as if the user were in the lab, they are not insurmountable.

With a significant proportion of our regular readership probably under home lockdown, we were wondering if we could help you at this difficult time by pointing out some useful online resources. So, when we finally come out of this pandemic, you could do so better skilled and more up to date than when we went into it.

Tony and Lutgarde Buydens give us an update on the planning for the major EuroAnalysis 2021 conference, which is being held in Nijmegen, the Netherlands, at the end of August 2021. At this stage, they are keen to gather suggestions from readers on topics they would like to see covered. Groups are also invited to consider hosting their own event under the EuroAnalysis 2021 banner.

The authors offer many useful points to consider when using pre-processing techniques.

The Tony Davies Column covers a wide range of topics of interest to spectroscopists in both industry and academe, with an emphasis on data handling and processing. Read more about the
